Why do businesses need multi-cloud?

In the past, the most common trend for organizations was to move their on-premises workloads to the cloud. This gave them far more agility to scale as needed, without the complex procurement processes that running their own data centers demanded.

A lot has changed since CapEx versus OpEx was the main topic of discussion around cloud adoption. Organizations have found their cloud of choice and migrated legacy workloads to it. While many applications are newly developed or redesigned in a fully cloud-native fashion, some companies have instead chosen to “lift and shift” existing workloads onto the cloud.

An architect has a number of options and attributes to weigh when choosing among the public cloud compute offerings on the market.

Serverless or app services would appear to be the most effective choice for computing (from a developer point of view) when the need is to build a number of functionalities contained within a simple application. With minimal knowledge required for DevOps or hosting, a programmer can quickly set up an application, just by referencing the provided documentation regarding the components.

However, as the organization and the application grow in scope, scale, and transaction volume, hosting costs also rise notably. At this point, the organization can find itself with limited options for optimization, whether that means migrating to another cloud or distributing across multiple clouds, because of its tight dependencies on the current cloud provider.

Virtual machines, on the other hand, give system engineers wide flexibility to explore and configure anything that is needed. However, the administrative and operational overhead of managing those instances increases significantly as the system scales.

Hence, for an organization with hundreds of microservice apps and high transaction volumes, or for companies looking to grow quickly to this scale, container-based architectures provide the most practical structure, balancing flexibility, portability, and affordability. They also provide the efficiency needed for further growth and expansion to a multi-cloud solution, which ultimately can bring about the following benefits:

- Competitive pricing
- High degree of resilience
- Higher availability
- Flexibility during security events
- Closer proximity to users and better performance
- Easier risk management options

Strategy

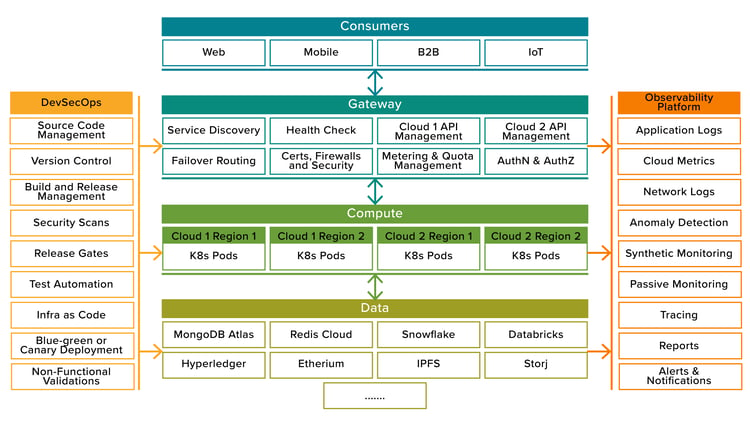

Choosing the compute service - The sweet spot for computing in a larger organization with complex applications is a cloud configuration using containers. By choosing a container orchestration engine like Kubernetes, which every major public cloud provider offers as an equivalent managed service, the containerized application can be deployed to any cloud. The “build once, deploy anywhere” strategy has grown from the application level (dating back to Java) to the system level. With Kubernetes as the container orchestration engine of choice, the application packaging, container runtime, Kubernetes deployments, and service configurations are all portable across any cloud with a compatible Kubernetes service.
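As a rough illustration of that portability, the sketch below builds one standard `apps/v1` Deployment manifest and pairs it, unchanged, with a `kubectl apply` command per cloud. The context names (`eks-prod`, `aks-prod`, `gke-prod`), the image URL, and the helper functions are hypothetical; only the manifest fields and the `kubectl --context ... apply -f -` form come from Kubernetes itself.

```python
import json
from typing import Dict, List


def build_deployment(name: str, image: str, replicas: int = 2) -> dict:
    """Return a standard apps/v1 Deployment manifest as a plain dict."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {"containers": [{"name": name, "image": image}]},
            },
        },
    }


# Hypothetical kubeconfig context names, one per managed Kubernetes cluster.
CLOUD_CONTEXTS = {"aws": "eks-prod", "azure": "aks-prod", "gcp": "gke-prod"}


def plan_rollout(manifest: dict, contexts: Dict[str, str]) -> List[dict]:
    """Pair the single manifest with one kubectl command per cloud."""
    payload = json.dumps(manifest, sort_keys=True)  # kubectl accepts JSON as well as YAML
    return [
        {
            "cloud": cloud,
            "command": ["kubectl", "--context", context, "apply", "-f", "-"],
            "stdin": payload,  # identical for every cloud
        }
        for cloud, context in sorted(contexts.items())
    ]


manifest = build_deployment("orders", "registry.example.com/orders:1.0.0")
plan = plan_rollout(manifest, CLOUD_CONTEXTS)
```

The point of the sketch is that only the target context changes between clouds; the packaged application and its manifest stay the same.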

Data storage strategy - Deciding on the compute layer is just one element of a multi-cloud strategy. Data, on the other hand, would still sit in PaaS-based storage on each of these cloud providers. Several PaaS-specific offerings are cost-effective to begin with but lock the organization into a specific cloud. For example, Amazon’s Aurora is an RDBMS solution similar to a MySQL database, but an equivalent set of capabilities is not offered in other cloud environments. Amazon DynamoDB is another such example.

Deploying a service in multiple clouds requires the data layer to be kept in sync so that consumers see the same response, no matter which cloud is serving them. Such a design can be made possible by choosing storage services outside of the typical public cloud subscriptions.

- Microservice applications can benefit greatly from a NoSQL datastore such as MongoDB Atlas, which orchestrates its services across clouds.
- Redis Cloud provides cloud-agnostic in-memory caching options.
- Platforms such as ArangoDB provide great GraphQL interfaces, freeing applications from the worry of being tied to a specific cloud.
- When it comes to data lakes, Snowflake, Databricks, etc., have great offerings.
- While all these data storage options have some flavor of public cloud, blockchain-based systems, such as Ethereum, Hyperledger, R3, etc., can provide even better storage options. IPFS, Storj, etc., are also options to review while building decentralized apps, purely agnostic to the cloud service provider.

Gateway strategy - The point of ingress for consumers is another area that requires a sound decision. Choosing a centralized API management solution from an established service provider is one option, while integrating with multiple cloud backends through a set of routing policies or rules is another. Such a gateway gives the ability to track and measure requests across multiple clouds, which is important in cases where monetization of APIs is needed.

While such gateways may be considered a lock-in, it is still much easier to switch to an alternative as and when appropriate. The only effort required would be to port over the configurations, not the entire set of servicing apps.

An alternative option for the gateway is to use a specific one on each cloud (or even at region level) and set up service discovery, such as a DNS lookup, that points to the specified backends based on a routing policy. The routing policy should also include a health check and failover routing records. DNS-based routing will not work well if all services are located under one domain, because a failure in even one service would route every service under that domain to the alternate cloud. Therefore, this option also requires assigning a DNS subdomain to each group of microservices for better routing flexibility.
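The per-subdomain failover idea can be sketched in a few lines. This is a simplified in-memory model, not a real DNS provider API: the `Backend` class, the `resolve` function, the hostnames, and the health flags are all hypothetical, and a managed DNS service would evaluate health checks itself.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Backend:
    cloud: str       # which provider hosts this endpoint
    endpoint: str    # hypothetical gateway hostname on that cloud
    healthy: bool    # result of the most recent health check


def resolve(subdomain: str, routing: Dict[str, List[Backend]]) -> Optional[str]:
    """Return the first healthy endpoint for a subdomain, in priority order."""
    for backend in routing.get(subdomain, []):
        if backend.healthy:
            return backend.endpoint
    return None  # unknown subdomain, or every backend is failing


# Illustrative routing table: primary record first, failover record second.
ROUTING = {
    "orders.api.example.com": [
        Backend("aws", "orders.aws.example.com", healthy=False),
        Backend("azure", "orders.azure.example.com", healthy=True),
    ],
    "billing.api.example.com": [
        Backend("aws", "billing.aws.example.com", healthy=True),
        Backend("azure", "billing.azure.example.com", healthy=True),
    ],
}

orders_target = resolve("orders.api.example.com", ROUTING)
billing_target = resolve("billing.api.example.com", ROUTING)
```

In this sample, the failing orders group fails over to the second cloud while billing stays on its primary, which is the flexibility that separate subdomains are meant to provide.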

Metrics from each cloud can be harvested with observability platforms which, in turn, can be aggregated for reporting, decisions, and actions. These actions, executed with near real-time effect, can be used to apply necessary controls, similar to that of a centralized API management solution.
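As a minimal sketch of that aggregation step, assume each cloud's observability export has already been reduced to request counts per API consumer. The function names, consumer names, counts, and quotas below are made up for illustration and do not come from any particular platform.

```python
from collections import Counter
from typing import Dict, List


def aggregate_requests(per_cloud: Dict[str, Dict[str, int]]) -> Dict[str, int]:
    """Sum request counts per consumer across every cloud."""
    total: Counter = Counter()
    for counts in per_cloud.values():
        total.update(counts)  # Counter.update adds counts rather than replacing them
    return dict(total)


def consumers_over_quota(totals: Dict[str, int], quotas: Dict[str, int]) -> List[str]:
    """Return consumers whose combined usage exceeds their quota."""
    return sorted(
        name for name, count in totals.items()
        if name in quotas and count > quotas[name]
    )


# Illustrative sample data only.
SAMPLE_COUNTS = {
    "aws": {"partner-a": 700, "partner-b": 100},
    "gcp": {"partner-a": 400, "partner-b": 50},
}
QUOTAS = {"partner-a": 1000, "partner-b": 1000}

totals = aggregate_requests(SAMPLE_COUNTS)
throttle = consumers_over_quota(totals, QUOTAS)
```

In this sample, neither cloud sees `partner-a` exceed its quota on its own; the breach only becomes visible once the counts are combined, which is why the aggregation has to happen outside any single cloud.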

Observability strategy - Cloud native monitoring tools are specific to the cloud and, in many cases, even specific to regions within the cloud. Multi-region or multi-cloud strategies need to be supported by a third party solution, such as Datadog, Prometheus, AppDynamics, Splunk, etc. These tools tap into the cloud and extract actionable metrics and logs, enabling the data to be then aggregated and leveraged for other actions. The tool or tools of choice for observability should also support open tracing and code instrumentation for detailed troubleshooting and passive monitoring. Synthetic monitoring is also an important aspect where the tool should be able to hit the health check on servers from various locations and trigger alerts in case of issues.

DevSecOps Strategy - Finally, to enable applications running on multiple clouds, a robust CI/CD pipeline is needed to apply gating conditions at each phase and to manage code versions, services, applications, tests, and infrastructure scripts, along with environment-specific values and secrets. The platform should provide secure deployment across all the cloud providers.
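One way to picture gating conditions is as a list of named checks that each target cloud must pass before a release is promoted. The sketch below is an assumption-laden illustration: the gate names, the release fields, and the sample values are hypothetical and do not reflect any specific CI/CD product.

```python
from typing import Callable, Dict, List, Tuple

Release = Dict[str, object]
Gate = Tuple[str, Callable[[Release], bool]]

# Hypothetical gates; a real pipeline would define its own.
GATES: List[Gate] = [
    ("tests passed", lambda r: r["tests_passed"] is True),
    ("no critical vulnerabilities", lambda r: r["critical_vulnerabilities"] == 0),
    ("secrets resolved for target", lambda r: r["secrets_resolved"] is True),
]


def failed_gates(release: Release, gates: List[Gate] = GATES) -> List[str]:
    """Return the names of gates the release does not satisfy."""
    return [name for name, check in gates if not check(release)]


def promotable_clouds(releases: Dict[str, Release]) -> List[str]:
    """Return the clouds whose release candidate passes every gate."""
    return sorted(cloud for cloud, r in releases.items() if not failed_gates(r))


# Illustrative per-cloud status for one code version.
RELEASES = {
    "aws": {"tests_passed": True, "critical_vulnerabilities": 0, "secrets_resolved": True},
    "azure": {"tests_passed": True, "critical_vulnerabilities": 0, "secrets_resolved": False},
}

ready = promotable_clouds(RELEASES)
blocked_reason = failed_gates(RELEASES["azure"])
```

Evaluating the same gates per cloud keeps the promotion rules uniform while still letting environment-specific values, such as secrets, block a single target.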

Solution

Based on the various considerations, a high level solution for multi-cloud deployment would look as follows.

Conclusion

With the considerations provided, organizations can now plan to move to active-active multi-cloud deployments. This not only avoids lock-in to a single cloud provider, but also provides better availability, resiliency, and a great deal of flexibility in cloud cost management.